What comes after web literacy?

I was having a conversation with someone recently who holds a senior role at an edtech organisation. We both agreed that the equivalent of Mozilla Webmaker these days would not be teaching children HTML, CSS, and JavaScript. Instead, we would be teaching them how to understand and use AI tools to achieve their ends. This is still literacy.

In presentations around digital literacies, I've often talked about the historical importance of 'view source' on web pages as helping teach people how the web works. That, of course, doesn't really work any more given the complexity of modern web pages, but the principle is the same: what's the layer beneath the interface?

Nowadays, when the layer beneath is AI, what's the equivalent of 'view source'? What comes after web literacy?

The Webmaker era was replaced by the Platform era

I won't rehash what I discussed in my recent post Beyond Elegant Consumption (Again) but just to say that, as Karen Smith and I wrote in a Webmaker whitepaper, polished experiences often leave people as passive users rather than active participants. AI is the definition of polished output, almost no matter what the input.

While we want interfaces that delight and surprise us, when they're locked down and there's no way to look under the hood, we're trapped as mere consumers of other people's content. This was the argument for web literacy: learn HTML, CSS, and JavaScript so that you can help build the web, not just consume it .

But in the time since I was at Mozilla, the platforms won. The everyday experience of computing for most people has changed from files and folders to algorithmic feeds. Something which seemed so foundational: a filesystem, on hardware you control, seems alien to people who have grown up on iOS and Google Drive.

You're running out of space on your phone? No problem, iCloud will happily “optimise” your local storage – i.e. quietly move your files to Apple's servers. It's done so seamlessly that most users have no idea what's actually happening in the background.

It's not like there's no layer beneath the interfaces that we use in 2026, it's just that they're buried. You can still view source, but good luck finding anything intelligible with a React-built platform with minified JavaScript and third-party scripts loading from six different domains.

So perhaps it's worth differentiating between the technical layer and the cognitive layer. What can a curious person understand about a system these days?

Moving into the AI era

Whereas platforms went out of the way to hide the source so it couldn't be viewed, we're now in a situation where the source cannot be inspected at all.

There is no 'view source' with a large language model (LLM). You enter a prompt and get an output, between which lies a statistical model (i) trained on data you didn't choose, (ii) governed by weights you (usually) can't see, (iii) running on hardware you do not own, and (iv) operated by a company you don't control. It's not just inaccessible, it's impenetrable.

As it's a statistical model and not deterministic, it's different to what came before. For example, we ran Webmaker parties where people could follow guidance on how to use HTML, CSS, and JavaScript. We knew that entering these things ended up with these consequences. That's not true with LLMs.

Depending on system prompts, temperature settings, and post-processing features, LLMs behave differently. Look at the difference between what you get when using a tool like Convene the Council – which is more explicit about these differences.

This is, of course, a very different problem to the platform era. When dealing with platforms, there was still some way of figuring out what was going on under the hood. Not so with AI.

That means, in turn, that the literacy questions changes. While web literacy helped us read the technical and conceptual layer beneath the page, AI literacy has to ask something else – namely, whether you even recognise at which layer you are operating.

Literacy as layer navigation

I'm not against frameworks per se – I helped create one for BBC R&D only recently – but I'm against the kind of framework fundamentalism which suggests that frameworks are anything other than useful approximations. What I want to offer is a way of thinking.

One way to think of digital literacies is not as a single competency or bundle of skills, but as a capacity to move between layers of abstraction. To be able to do this you require an awareness of the cost/benefit of each layer.

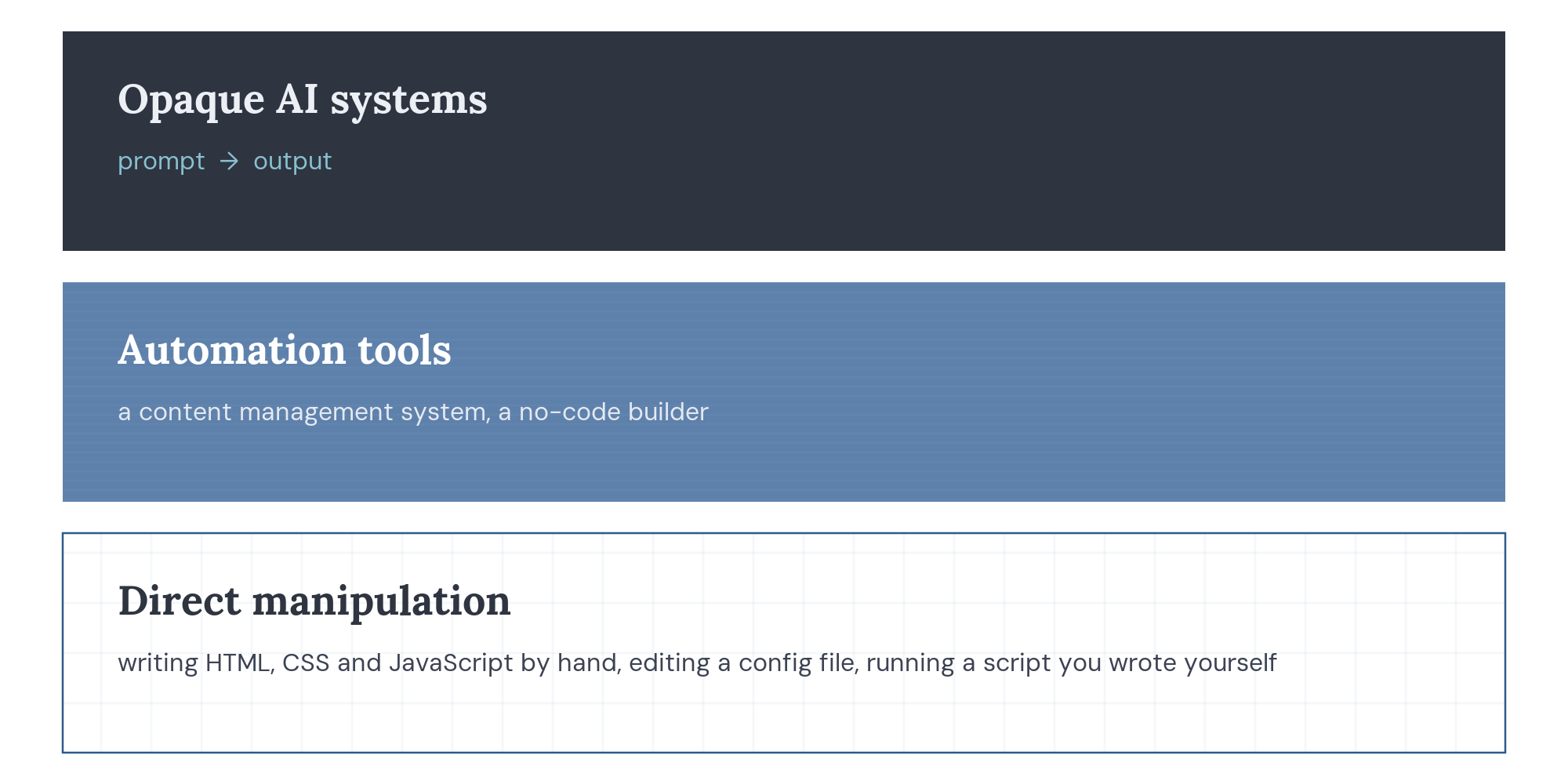

For example, if we skip the 'bare metal' level, at the bottom layer you have the direct manipulation of digital material – e.g. writing HTML, CSS, and JavaScript by hand. Editing a configuration file, or running a script that you wrote yourselves. This is a slow, error-prone approach that requires technical knowledge and gives maximum agency.

At the next layer up, you have tools that automate parts of that work. For example, a content management system, or a no-code builder. You trade some control for speed, with the question being whether you know what the trade-offs are.

Then, at the top layer, you have fully opaque systems where you type a prompt and receive an output. You have zero visibility into how the output was produced, and you can't check it against the source material. The cost here isn't just control and agency, but the ability to judge whether the output is any good.

So literacy, on this account, becomes not the ability to operate at the bottom layer all the time. That would be somewhat anachronistic. It's the ability to know which layer you're at, or should be working at, and recognise when to move up and down the stack.

What's next?

I'm just thinking aloud in this post, realising that the conversation I mentioned at the start of this post means that I need to be thinking differently about the work I've done before.

Recently, I've been building small tools lately which I'm referring to as folk software. They're tools made for one person, or a handful of people, with no real attempt to scale. Building this kind of software, which I could never do if I had to write each line of code by hand, has made me very aware of the different layers of the stack.

I know that the bottom layer is where full agency lives. The upper layers, though, are where most people spend most of their time. And so while digital literacies require an awareness of the whole stack, there needs to be an understanding that absolute fluency at all levels isn't required for a working, pragmatic literacy.

This is where I'm at at the moment. I don't think that what comes after web literacy is necessarily a new set of technical skills or a checklist of competencies. It's more the conceptual ability to know which layer you're working at, and whether you've got work to do to understand, and potentially be able to operate at, the other layers.

What do you think?