Claude Code used to be the obvious choice

I can't tell you how much I've enjoyed creating folk software over the past few months. You can see a bunch of apps over at dynamicskillset.com/tools.

I created the vast majority of these with Opus 4.6, paying $100/month for the Claude Max 5x plan. It was fast, capable, and had defaults like /handoff and /catchup that made it really easy to use.

While I had already experimented with alternatives (no-one likes vendor lock-in!) I have to say that I've been disappointed by Opus 4.7. It feels slower and less capable than 4.6. Enough so that the convenience I was getting from Claude is no longer enough to compensate for my discomfort about depending so heavily on one US platform.

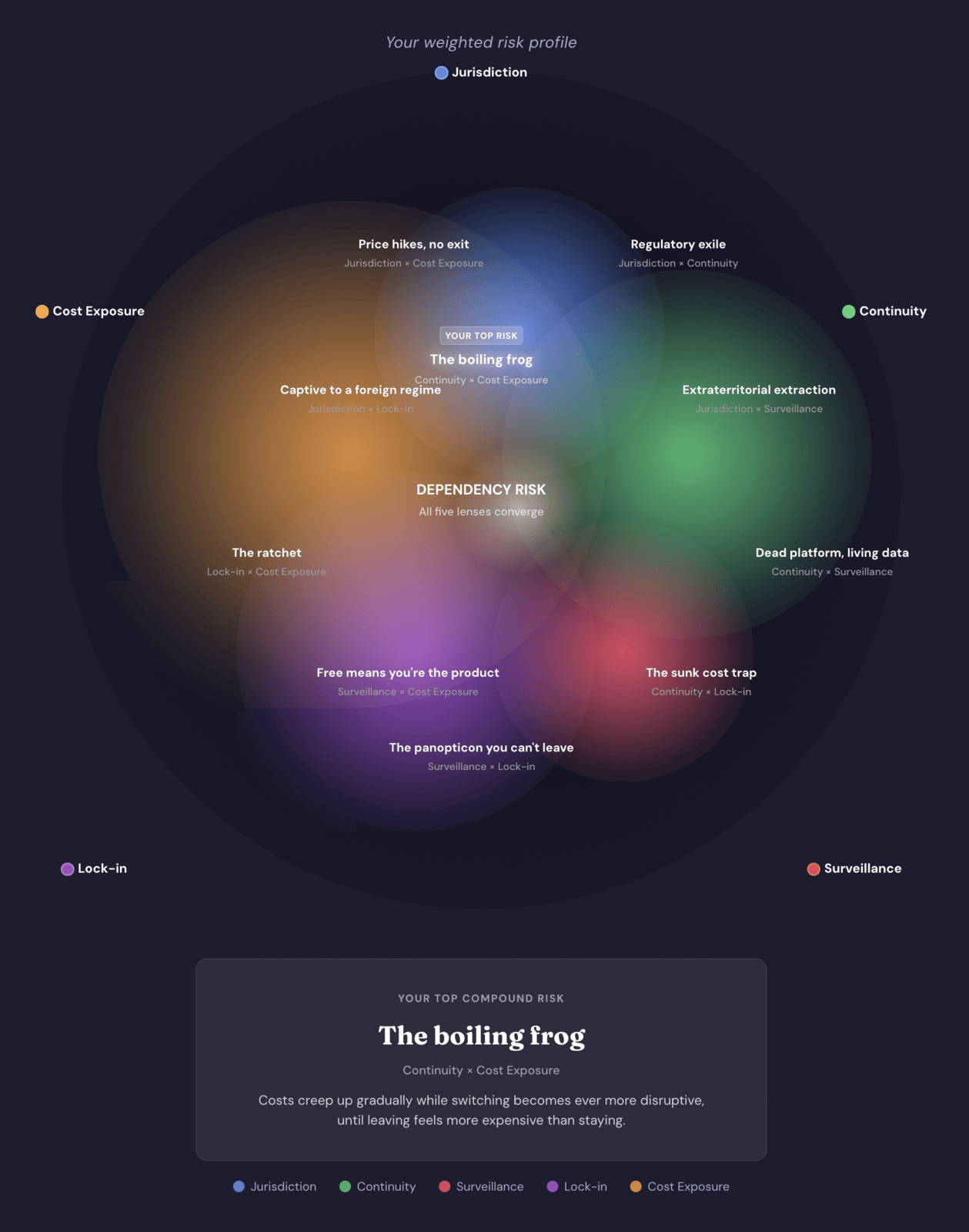

That's particularly true given that, through TechFreedom, I've been helping organisations move away from US-based Big Tech. I started to feel a bit like a potentially-boiled frog.

Tom and I came up with these lenses for social purpose organisations thinking about cloud platforms and infrastructure. But, sitting in front of Claude Code most days, it became hard not to apply the same questions to my own tools. It turns out that, once you have language for these risks, continuing as if your own dependencies are exempt starts to feel... weird.

What my setup looks like now

I've downgraded my Claude plan to the regular 'Pro' tier so that I can still use frontier models with their huge context windows for Product Requirements Documents (PRDs).

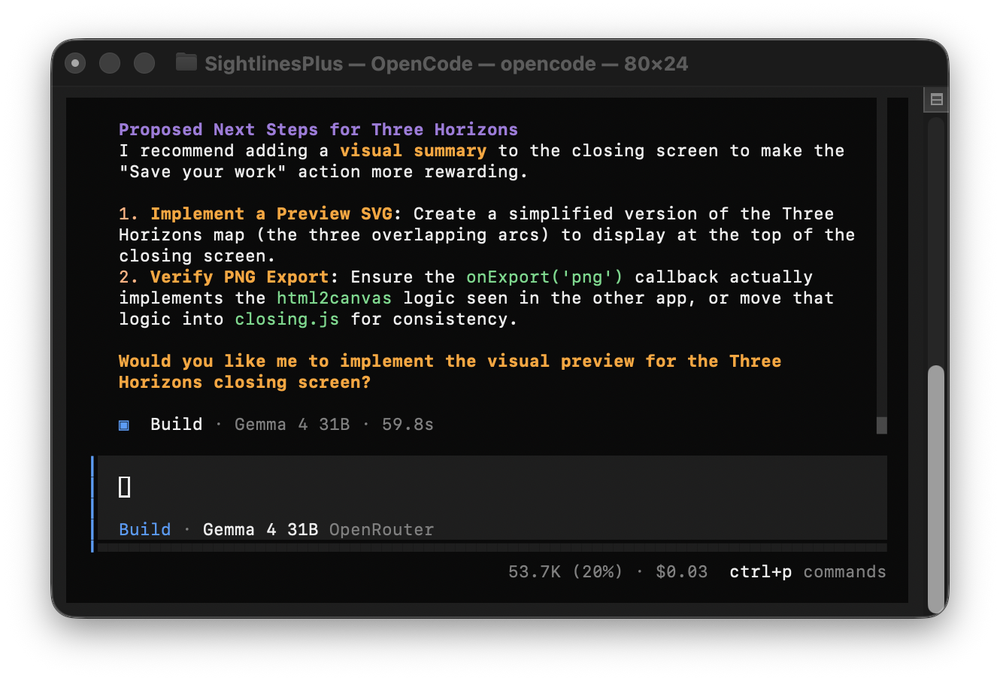

The actual development work is handled via OpenCode plus OpenRouter, mostly in the terminal. I get access to multiple models via OpenRouter, and can also use OpenCode to run local models.
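As a rough illustration of what "multiple models via OpenRouter" means in practice, here is a minimal sketch rather than the exact configuration described in this post. OpenRouter exposes an OpenAI-compatible chat completions endpoint, so switching models is mostly a matter of changing one string. The model identifier, prompt, and environment variable name below are placeholders chosen for illustration; check OpenRouter's model list for real IDs. The sketch only builds the request and does not send it.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the given model."""
    payload = {
        "model": model,  # swapping models means changing only this value
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Assumption: the key lives in an environment variable with this name.
api_key = os.environ.get("OPENROUTER_API_KEY", "sk-placeholder")

# "vendor/model-name" is a placeholder, not a real model ID.
req = build_request("vendor/model-name", "Summarise this diff", api_key)

# Passing req to urllib.request.urlopen() would actually send it.
```

Because the request shape stays the same across models, trying a different one for day-to-day work is a one-line change rather than a new integration.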

Right now, until my new Mac Studio M2 Ultra arrives, I'm using models like Qwen 3.6 Plus and Gemma 4 31B for day-to-day coding work. You can compare which models might work for you using Tom's excellent new Bearing tool.

I've configured OpenCode to behave in ways that are similar to what I liked about Claude – including the /handoff and /catchup commands. One plugin I've used heavily over the past few months, Impeccable, works with any AI tool, so that was easy to continue using.

Working in the terminal only, rather than via graphical interfaces, feels a bit like choosing a stripped-back text editor instead of a fully loaded word processor. While you lose some of the gloss, in exchange you gain laser focus and faster performance. It's great.

What's next?

I've had a good response to my Sightlines systems thinking tools, so I've started work on a series of 10 more advanced tools which organisations will be able to use together over a period of time. These will almost certainly be paid-for.

I'm well on my way to removing my dependence on US-based Big Tech. Yes, I'm still using some Apple devices. Yes, my house still has Google displays, and my Polestar 2 has Android Automotive built in, but I'm being much more intentional about my choices.

Questions I'm now asking

I've written this post not because I want to persuade you to do similar, and not because I'm flexing any tech skills (lol). What I'm trying to do is model the kind of questions I ask about AI tools.

When a new model or AI assistant appears, I'm not just looking at benchmarks and context window size, but also at whose jurisdiction it sits in, how it treats continuity, what data it collects, how easy it would be to leave, and how volatile its pricing is likely to be.
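If it helps to make those questions concrete, they can be written down as a tiny checklist. This is only a sketch: the field names, the 0 to 2 scale, and the example scores are all invented for illustration, not a scoring method anyone should treat as authoritative.

```python
from dataclasses import dataclass

# The five questions from the paragraph above, as hypothetical field names.
CRITERIA = (
    "jurisdiction",
    "continuity",
    "data_collection",
    "exit_ease",
    "pricing_stability",
)


@dataclass
class ToolAssessment:
    name: str
    scores: dict  # criterion -> 0 (worrying), 1 (unsure), 2 (comfortable)

    def total(self) -> int:
        """Sum the scores, refusing to skip any unanswered question."""
        missing = [c for c in CRITERIA if c not in self.scores]
        if missing:
            raise ValueError(f"unanswered questions: {missing}")
        return sum(self.scores[c] for c in CRITERIA)

    def concerns(self) -> list:
        """Return the criteria scored 0, in checklist order."""
        return [c for c in CRITERIA if self.scores.get(c) == 0]


# Made-up scores for a made-up tool.
assessment = ToolAssessment(
    name="example-tool",
    scores={
        "jurisdiction": 0,
        "continuity": 2,
        "data_collection": 1,
        "exit_ease": 2,
        "pricing_stability": 0,
    },
)

print(assessment.total())     # 5
print(assessment.concerns())  # ['jurisdiction', 'pricing_stability']
```

The number matters less than the list of concerns: a tool that scores well overall can still have one zero that is a dealbreaker.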

You don't have to pick a single vendor and live inside their world. Open and configurable used to mean terrible UX; I'm delighted to say that's no longer the case.